AI GTM Maturity Explained: What Stages 1-2 Actually Look Like in Real Companies

Becca Eddleman

Most teams think they’re further along in AI adoption than they actually are. They’ve got ChatGPT in the hands of reps, maybe even a Gong summary showing up in Slack, and that’s supposed to signal “maturity.” But AI GTM maturity isn’t about tool access. It’s about behavior, alignment, and readiness.

Early-stage maturity is about proving your org can use AI consistently, intentionally, and cross-functionally. Until that happens, AI and GenAI remain another experiment, not an advantage.

In this article, we’ll explore:

- The Wild West and emerging assistants in real GTM orgs

- The visible behaviors and signals that define each stage

- Why most teams stall between these early stages and how to start moving forward

We’re zooming in on the earliest stages because this is where most GTM orgs are stuck today. They lack the systems and behaviors to make AI work at scale.

Understanding what these stages look like in practice is the first step to moving forward.

Related Content:

AI GTM Strategy Playbook for GTM Leaders - We Call it PLAN

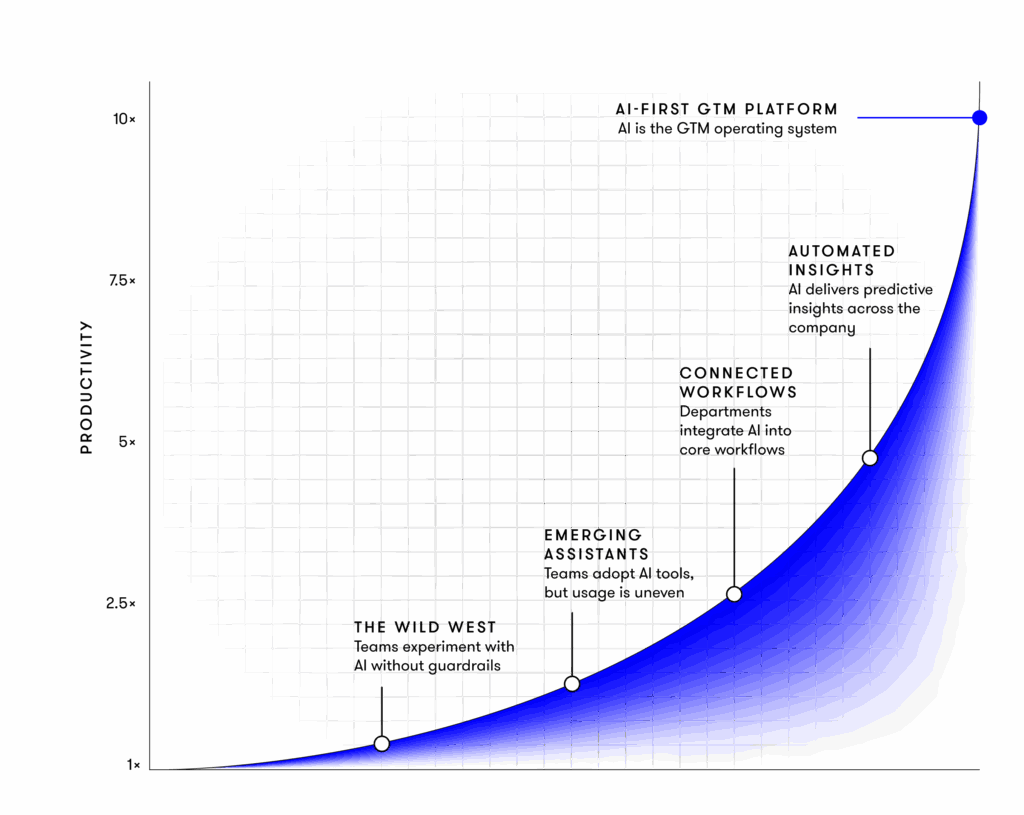

The AI GTM Maturity Curve

Before we dive into Stages 1 and 2, let’s ground ourselves in the full maturity model. The AI GTM Maturity Curve is built specifically for go-to-market leaders navigating the shift from AI experimentation to orchestration.

Why Maturity Models Matter for GTM, Not Just IT

Most maturity models focus on tooling or technical infrastructure. But for GTM teams, AI maturity is about something different: can your team consistently apply AI across workflows and functions to drive repeatable outcomes?

That’s what Skaled’s AI GTM Maturity Curve measures. It looks at both how much AI you’re using and how aligned your organization is in using it effectively.

The 5 Stages of AI GTM Maturity

- Stage 1: Wild West – Ad-hoc experiments with AI tools, no governance or repeatability.

- Stage 2: Emerging Assistants – Team-level adoption, uneven usage, early wins, but disconnected systems.

- Stage 3: Connected Workflows – Department-level maturity, workflows integrated into CRM/BI with guardrails.

- Stage 4: Automated Insights – Company-wide intelligence, AI workflows deliver proactive insights.

- Stage 5: AI-First GTM Platform – Enterprise-level orchestration, unified AI platform, and culture.

For a full breakdown of each stage, see: AI GTM Maturity Curve

Stage 1 – The Wild West (Ad-Hoc AI Experiments)

Welcome to Stage 1. This is where most GTM teams think they’re “doing AI,” but in reality, they’re stuck in scattered experimentation.

There may be energy and curiosity. But without alignment, governance, or enablement, these efforts rarely deliver anything beyond a few Slack-worthy wins.

How to Spot Stage 1 in Your GTM Org

- AI usage is siloed, usually driven by a few Sales or SDR team members testing tools on their own.

- No enablement strategy exists. Reps might be using ChatGPT, but there’s no centralized training, documentation, or accountability.

- RevOps and leadership have little visibility into how AI is being used or why.

- Tool examples: ChatGPT prompts written in isolation, Gong summaries getting dropped into Slack, uncoordinated AI pilots with no plan to scale.

There’s no shared language across teams. No standards. No measurement. No repeatability.

This is the “figure it out” era, and it shows.

What’s Missing

- No roadmap. AI adoption isn’t tied to GTM objectives or buyer journeys.

- No prompt governance. Teams write and reuse whatever seems helpful, with no quality control.

- No metrics. There’s no way to know what’s working or why, only anecdotal feedback.

A widely cited MIT-linked figure suggests roughly 95% of generative AI pilot programs in SaaS/GTM contexts fail to deliver their promised ROI, often due to uncoordinated experiments and weak problem-solution fit. So if you’re in Stage 1, you’re far from alone. But 2026 is shaping up to be a transformational year, and you don’t want to stay stuck in scattered experiments for long.

Related Content:

The Hidden Cost of Poor AI GTM Prioritization

Stage 2 – Emerging Assistants (Team-Level Adoption)

Stage 2 is where things start to click, at least on the surface. Some teams are getting results. A shared vocabulary is emerging. There’s a growing belief that AI can be more than a side project.

But while the progress is real, it’s still inconsistent and rarely connected across the GTM engine.

The Behavioral Shifts that Define Stage 2

- Team-level adoption starts to take hold. Sales, SDR, or Enablement teams begin to build repeatable AI habits.

- A shared language forms. Phrases like “reprompt this,” “use the call summary,” or “check the assistant” start showing up across Slack or training decks.

- Usage is uneven. Some reps go deep. Others barely touch it. AI is showing promise, but it’s still optional and often unsupported.

- Early wins spark confidence, but without RevOps or leadership support, scaling becomes a challenge.

This is where many companies find themselves today: AI is being used in pockets, but it hasn’t translated into consistent, org-wide adoption. In fact, while roughly two-thirds of organizations report regular use of generative AI in at least some parts of the business, only about one-third say they’re actually scaling it across the organization.

AI Starts to Show Up in Enablement and Workflows

- Prompt libraries begin to emerge, often Google Docs or Notion pages shared across teams.

- Training initiatives get rolled out, typically by Enablement, to show reps how to get value from AI.

- AI Assistants (via tools like ChatGPT GPTs, Gemini Gems, Copilots) are in use, but they aren’t yet integrated into systems like the CRM or sales engagement platform.

The org is making progress, but the maturity is still fragile.

Emerging KPIs and Signals

- Productivity gains begin to show up anecdotally, like faster email writing, quicker research, and shorter ramp time.

- Some conversion metrics improve, especially in SDR or follow-up workflows.

- Early AI governance begins to surface, often as informal guidance or checklists.

- There’s intentional experimentation, but it’s not yet standardized or enforced.

Related Content:

AI GTM Engineer

Moving from the Wild West to Emerging Assistants: Where Most Teams Get Stuck

The leap from Stage 1 to Stage 2 is often underestimated. On paper, it looks like a small step: move from scattered experimentation to something a bit more coordinated. But in practice, this is where most teams stall, not because AI lacks potential, but because the organization lacks the structure to support it.

Here’s why that jump breaks down.

Misalignment Between GTM and AI Investment

Sales teams may be experimenting with tools. Enablement might even spin up some trainings. But without RevOps or leadership support, those efforts remain isolated. There’s no cross-functional alignment on why AI is being used or how success will be measured. AI remains a series of disconnected side projects.

Focusing on Tools Before Strategy

It’s easy to chase tools. The GenAI landscape moves fast, and every week there’s a new AI assistant or CRM plugin promising time savings or better messaging. But when teams lead with tools instead of strategy, adoption fizzles. There’s no consistency, no training, and no feedback loops: just short-lived excitement followed by abandonment.

Underestimating Data Hygiene and Workflow Design

Even when the intent is there, messy workflows bring everything to a halt. The AI can’t deliver value if reps don’t follow consistent processes or if fields are incomplete. You can’t scale what isn’t structured, and most GTM teams aren’t yet set up to make that leap.

In other words, teams stall because of a lack of coordination, not a lack of interest.

In fact, integration remains one of the biggest barriers: 28% of GTM leaders report difficulty folding AI into existing systems. Nearly as many point to skills gaps (29%) or organizational resistance to change (28%). These are structural problems.

To actually progress, teams need more than experimentation. They need support standing up the workflows, governance, and cross-functional alignment that make AI stick.

That’s where a dedicated AI GTM owner makes the difference. You want someone who can build the connective tissue between tools, training, and outcomes: someone who doesn’t just roll out tech but operationalizes it.

👉 Learn how Skaled helps GTM teams bridge this gap with AI GTM Engineer support.

How to Diagnose Your Stage (Simple Checklist)

Not sure where your team really stands on the AI GTM Maturity Curve? Let’s check.

| Diagnostic Question | Stage 1: Wild West | Stage 2: Emerging Assistants |
| --- | --- | --- |
| Who owns AI decisions? | Individual reps or managers running isolated tests | Team leads or enablement teams introducing shared usage |
| Are AI Assistants and best practices being shared? | No; usage is fragmented and undocumented | Yes; shared libraries or workflows begin to form |
| Are AI Assistants operationalized across workflows? | Not at all; tools are used sporadically | Some assistants are integrated into team workflows |
| Are reps trained on how/when to use AI? | No; usage is optional and self-guided | Some informal enablement is in place; usage is encouraged |
| Are you tracking productivity changes? | No; there’s no measurement framework | Early productivity lift observed, often anecdotal |
| Are you tracking conversion metric changes? | No; impact is not tied to revenue outcomes | Some early wins in top-of-funnel or follow-up conversions |

If your answers lean mostly left, you’re still in the Wild West and likely burning cycles on AI without real returns. If you’re mostly right, you’re stepping into Emerging Assistants territory but still need structure to scale.
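If you want to run the checklist across several teams and compare results, the “mostly left vs. mostly right” rule is simple enough to tally in a few lines. Below is a minimal Python sketch, not part of Skaled’s framework: the function name `diagnose_stage`, the answer labels `"wild_west"` and `"emerging"`, and the choice to report a 3–3 split as “between stages” are all illustrative assumptions.

```python
from collections import Counter

# Hypothetical answer labels: "wild_west" = left column, "emerging" = right column.
VALID_ANSWERS = {"wild_west", "emerging"}

# The six diagnostic questions from the checklist table.
QUESTIONS = [
    "Who owns AI decisions?",
    "Are AI Assistants and best practices being shared?",
    "Are AI Assistants operationalized across workflows?",
    "Are reps trained on how/when to use AI?",
    "Are you tracking productivity changes?",
    "Are you tracking conversion metric changes?",
]


def diagnose_stage(answers: dict[str, str]) -> str:
    """Return the stage whose column most answers fall into.

    Hypothetical helper: ties are reported as "Between Stage 1 and Stage 2",
    an assumption, since the checklist itself does not define a tie-breaker.
    """
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    invalid = {answers[q] for q in QUESTIONS} - VALID_ANSWERS
    if invalid:
        raise ValueError(f"unexpected answer labels: {sorted(invalid)}")

    # Count how many answers land in each column of the table.
    counts = Counter(answers[q] for q in QUESTIONS)
    left, right = counts["wild_west"], counts["emerging"]
    if left > right:
        return "Stage 1: Wild West"
    if right > left:
        return "Stage 2: Emerging Assistants"
    return "Between Stage 1 and Stage 2"


# Example: a team that shares a prompt library but has nothing else in place.
example = {q: "wild_west" for q in QUESTIONS}
example["Are AI Assistants and best practices being shared?"] = "emerging"
print(diagnose_stage(example))  # Stage 1: Wild West
```

A simple majority keeps the sketch faithful to the checklist’s “lean mostly left or right” wording; a team wanting more nuance could weight questions differently, but that would be its own judgment call rather than part of the model.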

You Can’t Scale What You Can’t Name

Too many GTM teams are trying to “scale AI” without first understanding where they actually are. They chase tooling before alignment. They launch pilots before defining ownership. They ask for ROI before they’ve even created repeatability.

Here’s the truth: AI rarely fails because of the technology. It fails because the organization isn’t ready.

Maturity starts with naming where you are. If you’re still in the Wild West, own it and start putting structure in place. If you’re seeing signs of Emerging Assistants, don’t stop there. Build the systems, training, and visibility to go further.

Build the internal alignment and readiness that unlock real results.

Ready to assess where your GTM org stands and start making real progress? Book a meeting with Skaled below.